PolyTask: Learning Unified Policies through Behavior Distillation

Abstract

Unified models capable of solving a wide variety of tasks have gained traction in vision and NLP due to their ability to share regularities and structures across tasks, which improves individual task performance and reduces computational footprint. However, the impact of such models remains limited in embodied learning problems, which present unique challenges due to interactivity, sample inefficiency, and sequential task presentation. In this work, we present PolyTask, a novel method for learning a single unified model that can solve various embodied tasks through a 'learn then distill' mechanism. In the 'learn' step, PolyTask leverages a few demonstrations for each task to train task-specific policies. Then, in the 'distill' step, task-specific policies are distilled into a single policy using a new distillation method called Behavior Distillation. Given a unified policy, individual task behavior can be extracted through conditioning variables. PolyTask is designed to be conceptually simple while leveraging well-established RL algorithms to enable interactivity, a handful of expert demonstrations to allow for sample efficiency, and distillation without interactive access to tasks to enable lifelong learning. Experiments across three simulated environment suites and a real-robot suite show that PolyTask outperforms prior state-of-the-art approaches in multi-task and lifelong learning settings by significant margins.

PolyTask

Method

PolyTask operates in two phases: learn and distill. During the learn phase, a task-specific policy is learned for each task using offline imitation from a few expert demonstrations, followed by online finetuning using RL. During the distill phase, all task-specific policies are combined into a unified policy using knowledge distillation on the RL replay buffers stored for each task.
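To make the distill phase concrete, here is a minimal NumPy sketch of one way it could look. It is not the authors' implementation. It assumes linear stand-ins for the task-specific policies, randomly generated observations in place of real replay buffers, a block-wise task conditioning scheme, and a mean-squared-error action-matching loss; all names (`teachers`, `buffers`, `task_features`, `unified_policy`) are hypothetical. The one property taken from the description above is that distillation only reads stored observations and never interacts with an environment.

```python
import numpy as np

rng = np.random.default_rng(0)
obs_dim, act_dim, num_tasks = 4, 2, 3

# Hypothetical stand-ins for the task-specific policies from the learn phase.
# Each "teacher" is a fixed linear map from observation to action (an assumption).
teachers = [rng.normal(size=(obs_dim, act_dim)) for _ in range(num_tasks)]

# Hypothetical stand-ins for the per-task RL replay buffers (observations only).
buffers = [rng.normal(size=(256, obs_dim)) for _ in range(num_tasks)]


def task_features(obs, task_id):
    """Condition on the task by writing obs into the block owned by task_id."""
    feats = np.zeros((obs.shape[0], num_tasks * obs_dim))
    feats[:, task_id * obs_dim:(task_id + 1) * obs_dim] = obs
    return feats


# Build the distillation dataset offline: stored observations, teacher actions.
X = np.concatenate([task_features(buf, t) for t, buf in enumerate(buffers)])
Y = np.concatenate([buf @ teachers[t] for t, buf in enumerate(buffers)])

# Unified (student) policy: one linear map over task-conditioned features.
W = np.zeros((num_tasks * obs_dim, act_dim))
lr = 0.5
for _ in range(500):
    err = X @ W - Y                      # student action minus teacher action
    W -= lr * 2.0 * X.T @ err / len(X)   # gradient step on the mean squared error

final_loss = float(np.mean(np.sum((X @ W - Y) ** 2, axis=1)))


def unified_policy(obs, task_id):
    """Extract one task's behavior from the unified policy via the task id."""
    return task_features(obs, task_id) @ W
```

Calling `unified_policy(obs, task_id)` with different `task_id` values recovers each task's behavior from the single weight matrix `W`. In this toy setup the student can represent every teacher exactly, so the loss goes to nearly zero; with neural network policies and real replay data the fit would only be approximate.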

Simulation Results

We run experiments on a total of 32 tasks across 3 simulated environment suites: the DeepMind Control Suite, the Meta-World benchmark, and the Franka Kitchen environment.

Multi-task Learning

Lifelong Learning

Robot Results

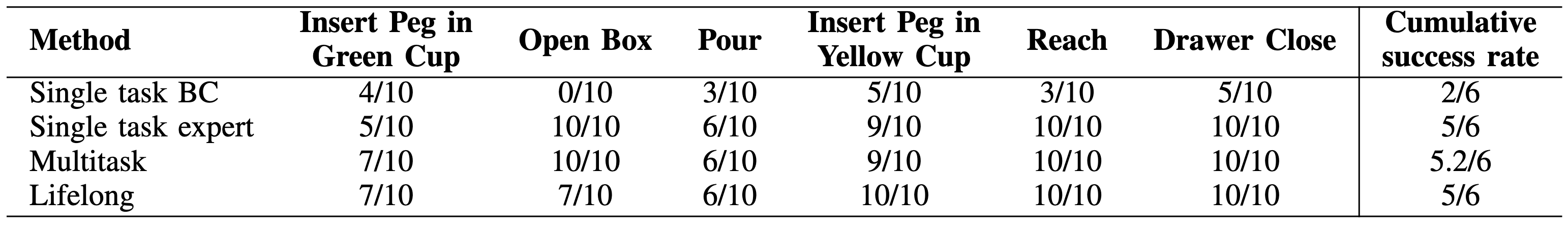

We devise a set of 6 manipulation tasks on an xArm robot to show the multi-task and lifelong learning capabilities of PolyTask in the real world.

Bibtex

@article{haldar2023polytask,
  title={PolyTask: Learning Unified Policies through Behavior Distillation},
  author={Haldar, Siddhant and Pinto, Lerrel},
  journal={arXiv preprint arXiv:2310.08573},
  year={2023}
}